Grade Level: 6-8

Time: 15 minutes or fewer

Subject: Engineering and Tech

01001000 01100101 01101100 01101100 01101111 00100001

Those ones and zeros might not look like anything to you, but in binary code the numbers are actually saying “Hello!”
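Each group of eight digits above stands for one character. The minimal Python sketch below assumes the standard 8-bit ASCII character codes (which these six groups match) and converts text to this form and back. The function names `to_binary` and `from_binary` are made up for illustration, and the sketch only handles characters whose codes fit in eight bits, such as plain English letters and punctuation.

```python
def to_binary(text: str) -> str:
    """Turn each character into its 8-digit binary character code."""
    # ord() gives the character's numeric code; "08b" writes it as 8 binary digits
    return " ".join(format(ord(ch), "08b") for ch in text)


def from_binary(bits: str) -> str:
    """Turn space-separated 8-digit groups back into characters."""
    # int(group, 2) reads a string of 0s and 1s as a base-2 number
    return "".join(chr(int(group, 2)) for group in bits.split())


print(to_binary("Hello!"))
# 01001000 01100101 01101100 01101100 01101111 00100001
print(from_binary("01001000 01100101 01101100 01101100 01101111 00100001"))
# Hello!
```

Try replacing "Hello!" with your own name to see its binary spelling.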

Any code that uses just two symbols to represent information is considered binary code. Different versions of binary code have been around for centuries and have been used in a variety of contexts. For example, Braille uses raised and unraised dots to convey information to people who are blind, Morse code uses long and short signals to transmit information, and the example above uses sets of 0s and 1s to represent letters. Perhaps the most common use for binary nowadays is in computers: binary code is the way that most computers and computerized devices ultimately send, receive, and store information.

Write Your Name in Binary Code in Lots of Ways

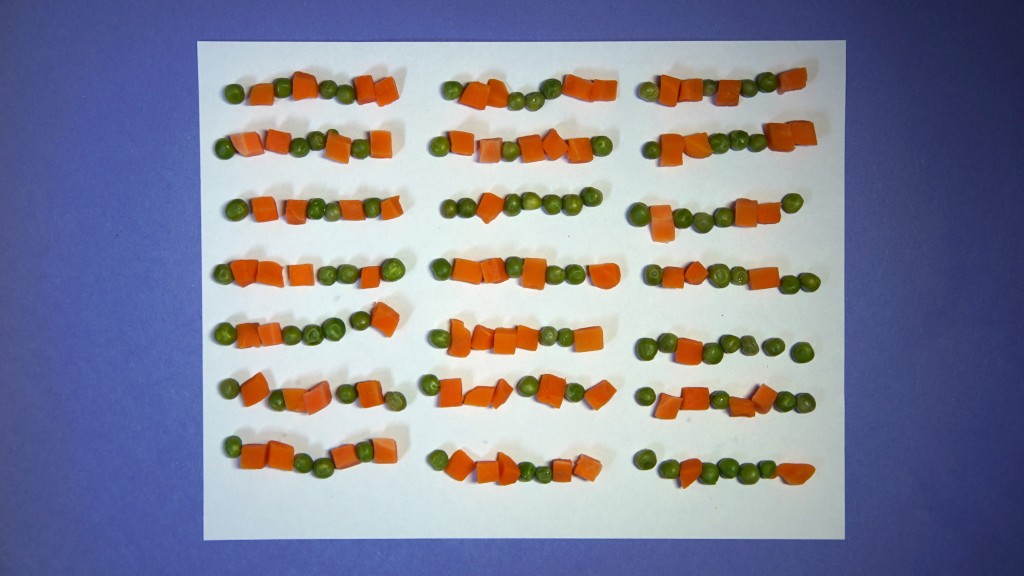

The 0s and 1s of binary code are somewhat arbitrary. Any symbol, color, or physical object that can exist in two different forms or states can be used as a binary code: a coin (heads and tails), a switch (on and off), colors (blue and green), or shapes (circle and square). For example, the image above shows the words “Science Friday Rules!” written in binary using peas and carrots.
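Because the symbols are arbitrary, swapping them does not change the information. The Python sketch below, again assuming 8-bit ASCII character codes, writes a word with coin flips instead of digits. Choosing H (heads) for 1 and T (tails) for 0 is an arbitrary assumption; any two different single, non-space characters would work, and the function names are hypothetical.

```python
def encode_with_symbols(text: str, zero: str, one: str) -> str:
    """Write text in 8-bit binary, using `zero` and `one` in place of 0 and 1."""
    groups = []
    for ch in text:
        bits = format(ord(ch), "08b")  # e.g. "H" -> "01001000"
        groups.append("".join(one if b == "1" else zero for b in bits))
    return " ".join(groups)


def decode_with_symbols(code: str, zero: str, one: str) -> str:
    """Reverse encode_with_symbols by mapping the two symbols back to 0 and 1."""
    chars = []
    for group in code.split():
        bits = "".join("1" if s == one else "0" for s in group)
        chars.append(chr(int(bits, 2)))
    return "".join(chars)


# Heads (H) = 1 and tails (T) = 0 is an arbitrary choice.
coded = encode_with_symbols("Hi", zero="T", one="H")
print(coded)  # THTTHTTT THHTHTTH
print(decode_with_symbols(coded, zero="T", one="H"))  # Hi
```

Swapping in "P" for pea and "C" for carrot gives a text version of the vegetable code; which vegetable stands for 0 is up to you.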

Why Is Binary Code Such a Big Deal?

Tracy Kidder explains why two symbols are enough in his book The Soul of a New Machine:

“Computers, it is often said, manipulate symbols. They don’t deal with numbers directly, but with symbols that can represent not only numbers but also words and pictures. Inside the circuits of the digital computer these symbols exist in electrical form, and there are just two basic symbols – a high voltage and a low voltage. Clearly, this is a marvelous kind of symbolism for a machine; the circuits don’t have to distinguish between nine different shades of gray but only between black and white, or in electrical terms, between high and low voltages.”

Copyright © 1981 by John Tracy Kidder. Reprinted by permission of Little, Brown and Company, New York, NY. All rights reserved.
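Kidder’s point about high and low voltages can be sketched in a few lines of Python. The 2.5-volt cutoff and the sample readings below are hypothetical values chosen for illustration, not the specification of any real circuit. The idea is that a reading only has to land on the correct side of one line, so small wobbles in voltage do not change the bit.

```python
# Hypothetical cutoff: readings at or above it count as "high" (1), below it as "low" (0).
THRESHOLD_VOLTS = 2.5


def read_bits(voltages: list[float]) -> str:
    """Classify each voltage reading as a 1 (high) or a 0 (low)."""
    return "".join("1" if v >= THRESHOLD_VOLTS else "0" for v in voltages)


# Slightly noisy, made-up readings still fall clearly on one side of the cutoff.
signal = [4.8, 0.3, 0.1, 5.1, 0.2, 4.6, 0.4, 0.0]
print(read_bits(signal))  # 10010100
```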


Extension: Does Bit Number Matter?

Arranging and reading bits in ordered groups is what makes binary exceptionally powerful for storing and transmitting huge amounts of information. To understand why, it helps to consider the alternative: what if only one bit were used at a time? Then you could share only two types of information, one represented by 0 and the other by 1. Forget encoding the entire alphabet or punctuation marks; you get just two kinds of information.

But when you group bits in pairs, you get four kinds of information:

00, 01, 10, 11

Moving from two-bit groups to three-bit groups doubles the number of different kinds of information you can encode:

000, 001, 010, 011, 100, 101, 110, 111

While eight different kinds of information are still not enough for representing a whole alphabet, perhaps you can see where the pattern is headed.
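One way to check the pattern is to have a computer list every possible group. This Python sketch uses the standard library’s `itertools.product` to build all arrangements of 0s and 1s of a given length (the function name `bit_groups` is made up for illustration). It stops at three bits so the activity below is still left to you.

```python
from itertools import product


def bit_groups(n: int) -> list[str]:
    """List every ordered arrangement of n bits, from all 0s to all 1s."""
    # product("01", repeat=n) yields tuples like ("0", "1"); join turns them into "01"
    return ["".join(bits) for bits in product("01", repeat=n)]


for n in range(1, 4):
    groups = bit_groups(n)
    print(f"{n}-bit groups: {len(groups)} -> {', '.join(groups)}")
# 1-bit groups: 2 -> 0, 1
# 2-bit groups: 4 -> 00, 01, 10, 11
# 3-bit groups: 8 -> 000, 001, 010, 011, 100, 101, 110, 111
```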

Using any binary code representation you’d like, try to figure out how many possible combinations you can make with bits grouped in fours. Then try again with bits grouped in fives. How many possible combinations do you think you can get using six bits at a time, or 64? By grouping single bits into larger and larger groups, computers can use binary code to find, organize, send, and store more and more kinds of information.

Kidder drives this point home in The Soul of a New Machine:

“Computer engineers call a single high or low voltage a bit, and it symbolizes one piece of information. One bit can’t symbolize much; it has only two possible states, so it can, for instance, be used to stand for only two integers. Put many bits in a row, however, and the number of things that can be represented increases exponentially.”
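Kidder’s “increases exponentially” can be written as a short formula. If N is the number of different things a group of n bits can represent, each added bit doubles N, because every existing combination can be followed by either a 0 or a 1:

```latex
N(n) = 2^{n}, \qquad N(n+1) = 2 \cdot N(n) = 2^{n+1}
```

This matches the four two-bit groups and eight three-bit groups listed earlier: N(2) = 4 and N(3) = 8.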

As computer technology has advanced, computer engineers have needed ways of sending and storing greater amounts of information at a time. As a result, the bit length used by computers has grown steadily over the course of computer history. If you have a new iPhone, it uses a 64-bit microprocessor, which means that it stores and accesses information in groups of 64 binary digits. Each of those groups can hold any one of 2⁶⁴, or more than 18,000,000,000,000,000,000, unique combinations of 0s and 1s. Whoa.

This idea of coding information with more bits at a time to improve the power and efficiency of computers has driven computer engineering from the beginning, and still does. Though this excerpt from The Soul of a New Machine was first published in 1981, the principle it describes, encoding information in ever-larger groups of bits, still reflects how computing power has progressed today:

“Inside certain crucial parts of a typical modern computer, the bits – the electrical symbols – are handled in packets. Like phone numbers the packets are of a standard size. IBM’s machines have traditionally handled information in packages 32 bits long. Data General’s NOVA and most minicomputers after it, including the Eclipses, deal with packages only 16 bits long. The distinction is inconsequential in theory, since any computer is hypothetically capable of doing what any other computer may do. But the ease and speed with which different computers can be made to perform the same piece of work vary widely, and in general a machine that handles symbols in chunks of 32 bits runs faster, and for some purposes – usually large ones – it is easier to program than a machine that handles only 16 bits at a time.”
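To put Kidder’s 16-bit and 32-bit packets next to today’s 64-bit processors, this Python sketch counts how many different combinations one group of each size can hold, using the same doubling rule (2 to the power of the number of bits). It is arithmetic only and says nothing about how fast any particular machine runs; the function name `combinations` is hypothetical.

```python
def combinations(bits: int) -> int:
    """Number of different patterns a group of `bits` binary digits can hold."""
    return 2 ** bits  # each extra bit doubles the count


# Group sizes mentioned in the text: 16 bits (Data General's NOVA and Eclipses),
# 32 bits (IBM's traditional size), and 64 bits (a new iPhone's processor).
for size in (16, 32, 64):
    print(f"{size}-bit groups: {combinations(size):,} combinations")
# 16-bit groups: 65,536 combinations
# 32-bit groups: 4,294,967,296 combinations
# 64-bit groups: 18,446,744,073,709,551,616 combinations
```

The last line confirms the figure given for 64-bit processors: a little over 18 quintillion.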



Meet the Writer

About Ariel Zych

Ariel Zych (@arieloquent) is Science Friday’s director of audience. She is a former teacher and scientist who spends her free time making food, watching arthropods, and being outside.